Run the setup wizard

configure.py is an interactive CLI that generates all configuration files for you. Run it once to get started, and re-run any time you want to change settings.

①

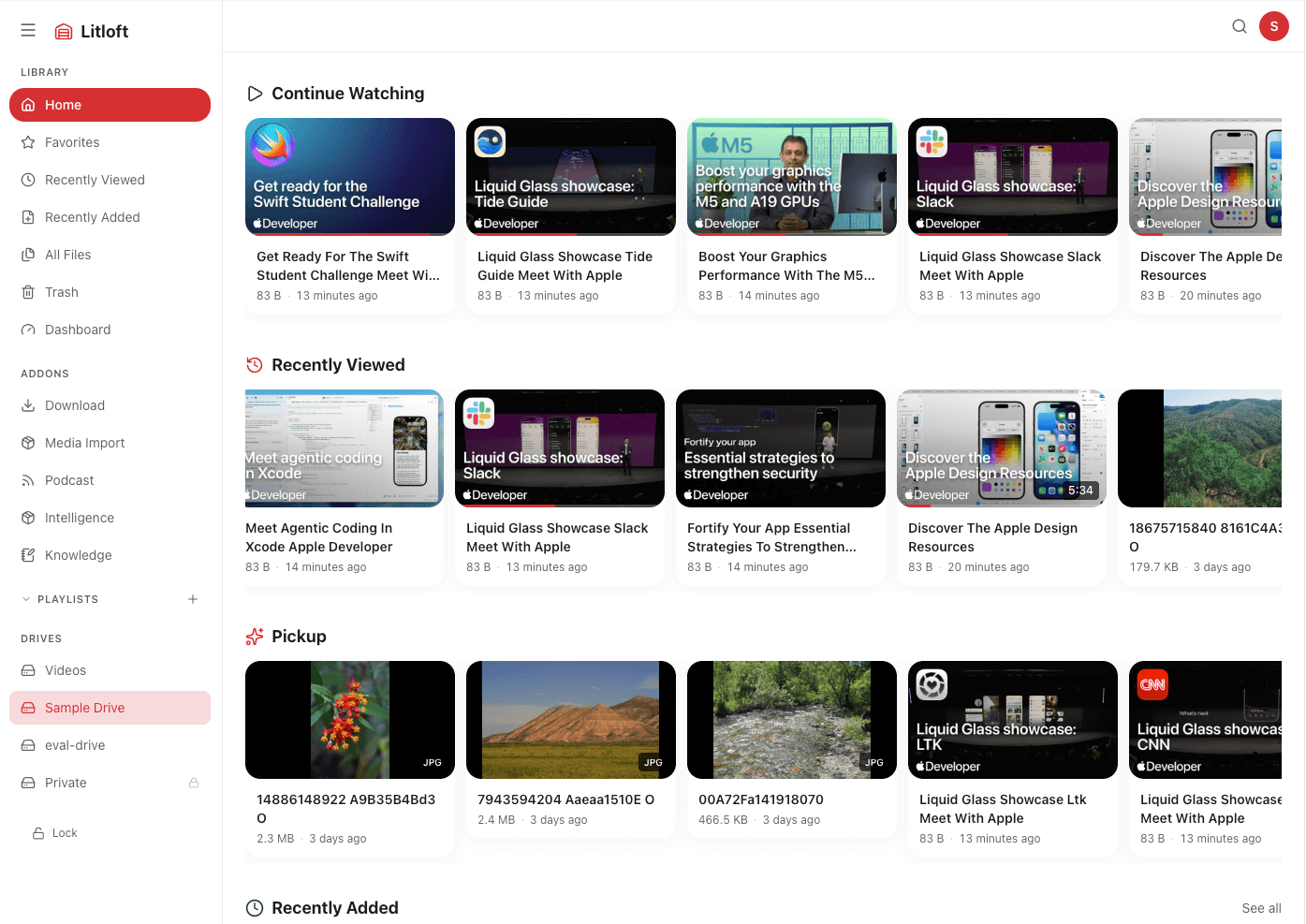

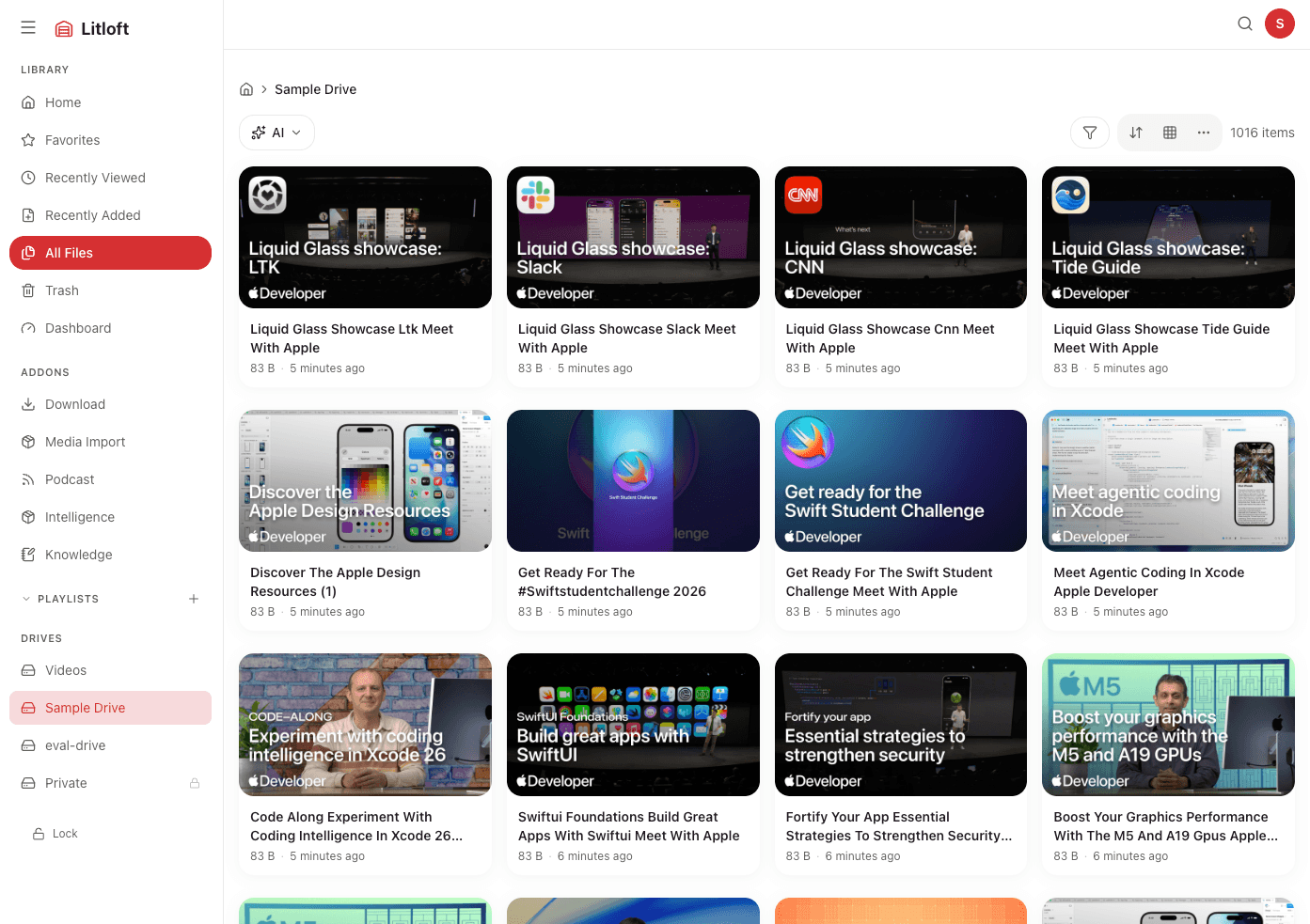

Drives

Enter the number of drives and the absolute path on your host for each one. Litloft mounts them read-only in the container.
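Before you run the wizard, it can help to confirm that each path you plan to enter is absolute and points at a real directory. The following is a minimal sketch of such a pre-check, not part of configure.py; the function name `validate_drive_paths` is hypothetical.

```python
from pathlib import Path


def validate_drive_paths(paths):
    """Split candidate host paths into usable ones and a list of problems.

    Hypothetical helper: it only checks that each path is absolute and is an
    existing directory, which is what the Drives step asks you to provide.
    """
    valid, problems = [], []
    for raw in paths:
        p = Path(raw)
        if not p.is_absolute():
            problems.append(f"{raw}: not an absolute path")
        elif not p.is_dir():
            problems.append(f"{raw}: not an existing directory")
        else:
            valid.append(str(p))
    return valid, problems
```

Call it with the paths you intend to type in, and fix anything reported in `problems` before starting the wizard.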

②

Port

The default port is 3000. Change it if you need to run multiple instances or avoid a conflict.
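If you are not sure whether port 3000 is already taken on your host, you can test it by trying to bind to it. This is a small standalone sketch using Python's standard `socket` module; the helper name `port_is_free` is an assumption for illustration and is not something the wizard provides.

```python
import socket


def port_is_free(port, host="0.0.0.0"):
    """Return True if a TCP socket can be bound to host:port right now.

    Binding to 0.0.0.0 checks all interfaces. The result is only a snapshot:
    another process could still claim the port afterwards.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        try:
            s.bind((host, port))
        except OSError:
            return False
        return True
```

For example, `port_is_free(3000)` returning `False` suggests picking a different port in this step.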

③

Password protection (optional)

Assign an access group to each drive and set a password. Drives without a group are accessible to anyone on your LAN. If passwords.json is not present at all, all drives are public regardless of group settings.
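The access rule above can be written out as a short function. This is only an illustration of the rule as described here, not Litloft's actual code; the function and parameter names are hypothetical.

```python
def drive_is_public(drive_group, passwords_file_exists):
    """Apply the access rule described above to a single drive.

    drive_group: the access group assigned to the drive, or None if it has none.
    passwords_file_exists: whether passwords.json is present at all.
    """
    # Without passwords.json, every drive is public regardless of its group.
    if not passwords_file_exists:
        return True
    # With passwords.json, only drives that have no group stay public.
    return drive_group is None
```

Note the consequence of the first branch: assigning groups has no effect until passwords.json exists.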

④

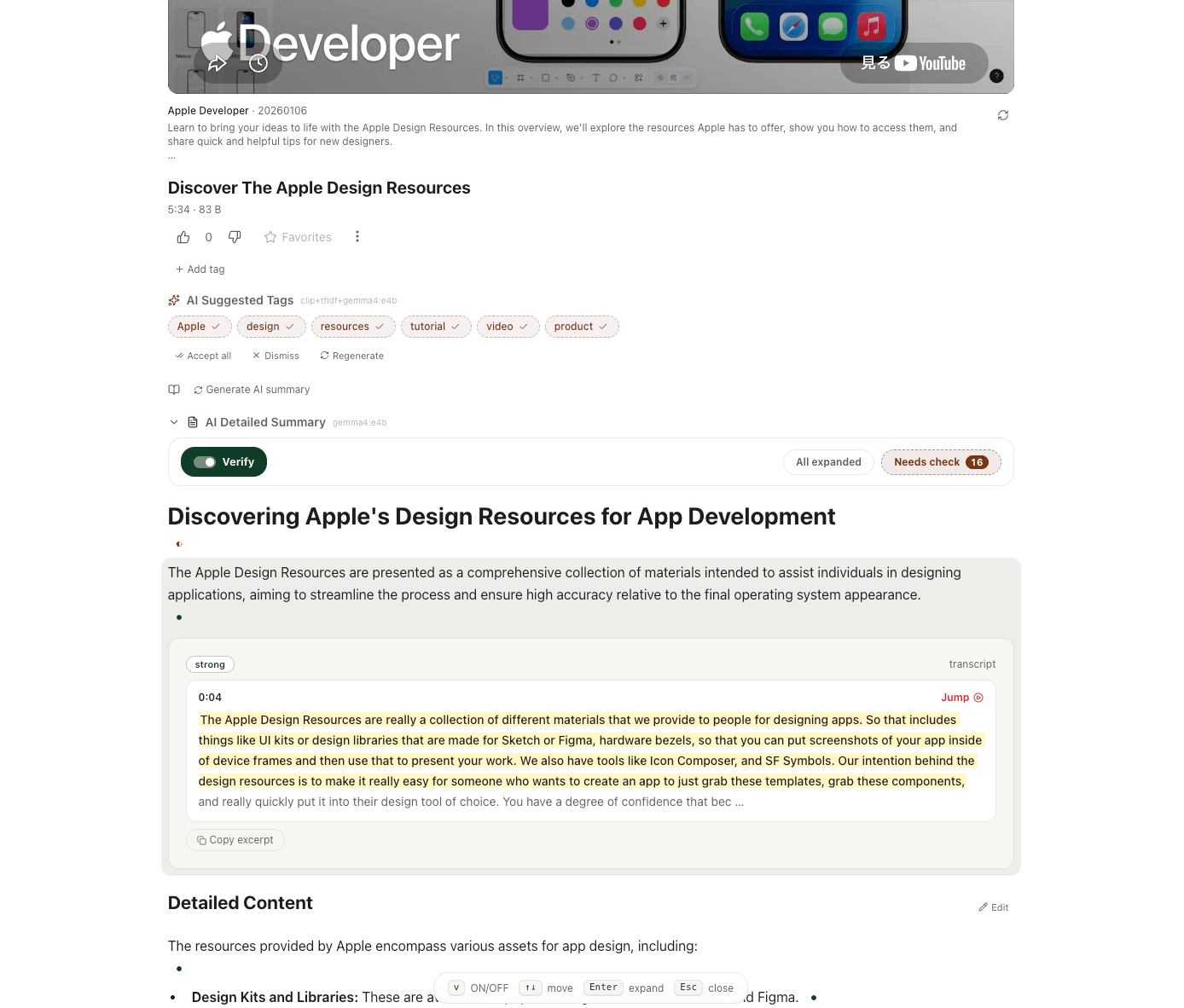

Intelligence addon (optional)

Choose a Whisper model for local video transcription, a text embedding model for semantic search, and an LLM provider for AI features. An LLM is required for auto-tagging, summaries, and the Ask feature; see the note below.

Whisper: small ~500 MB · turbo ~1.2 GB · large-v3 ~3 GB RAM. Indexing is CPU-intensive; embedding and transcription run in the background.
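To choose between the models, you can compare the figures above with the memory you are willing to give the addon. The sketch below treats those figures as approximate RAM requirements and picks the largest model that fits; the function name and the `headroom_mb` parameter are hypothetical, and ranking by memory footprint is an assumption rather than a statement about transcription quality.

```python
# Approximate figures from the list above, in MB.
WHISPER_RAM_MB = {"small": 500, "turbo": 1200, "large-v3": 3000}


def largest_model_that_fits(available_mb, headroom_mb=0):
    """Return the name of the largest listed model within the memory budget.

    headroom_mb is memory you want to keep free for everything else.
    Returns None if even the smallest model does not fit.
    """
    budget = available_mb - headroom_mb
    fitting = [(ram, name) for name, ram in WHISPER_RAM_MB.items() if ram <= budget]
    if not fitting:
        return None
    # max() compares the RAM figure first, so the biggest fitting model wins.
    return max(fitting)[1]
```

Because indexing is CPU-intensive and runs in the background, leaving some headroom is a reasonable precaution on a shared machine.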

⑤

Knowledge addon (optional)

Adds a linked Markdown notes vault. Secrets are auto-generated, so no manual configuration is needed.

The wizard writes docker-compose.override.yml, drives.json, passwords.json, and addons/intelligence/search-config.yml — all editable by hand later.